[MediaPipe] Face Detection

AI, ML, DL 2025. 2. 11. 19:58

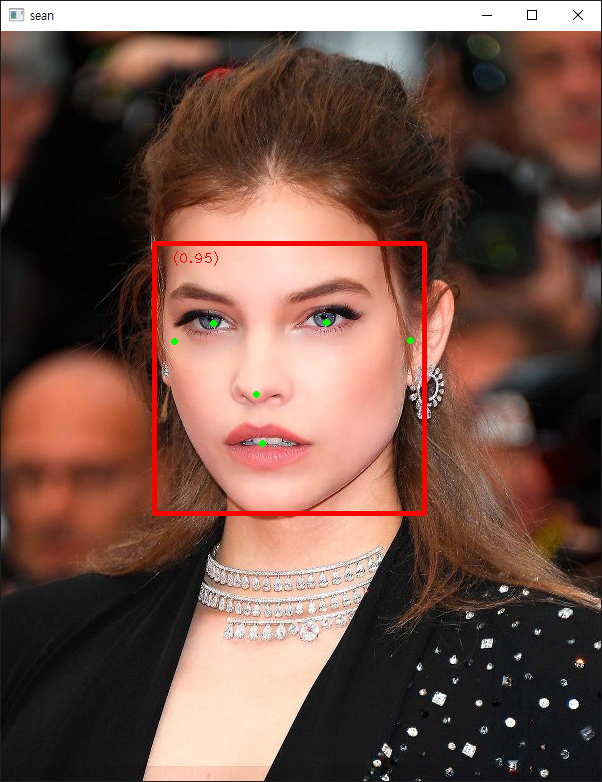

Let's use MediaPipe to detect a face and its keypoints (eyes, nose, mouth, ears).

```python
from typing import Tuple, Union
import math

import cv2
import mediapipe as mp
import numpy as np
from mediapipe.tasks import python
from mediapipe.tasks.python import vision

MARGIN = 10  # pixels
ROW_SIZE = 10  # pixels
FONT_SIZE = 1
FONT_THICKNESS = 1
TEXT_COLOR = (255, 0, 0)  # red (the image is still RGB while we draw on it)


def _normalized_to_pixel_coordinates(
        normalized_x: float, normalized_y: float, image_width: int,
        image_height: int) -> Union[None, Tuple[int, int]]:
    """Converts normalized value pair to pixel coordinates."""

    # Checks if the float value is between 0 and 1.
    def is_valid_normalized_value(value: float) -> bool:
        return (value > 0 or math.isclose(0, value)) and (value < 1 or
                                                          math.isclose(1, value))

    if not (is_valid_normalized_value(normalized_x) and
            is_valid_normalized_value(normalized_y)):
        # TODO: Draw coordinates even if it's outside of the image bounds.
        return None
    x_px = min(math.floor(normalized_x * image_width), image_width - 1)
    y_px = min(math.floor(normalized_y * image_height), image_height - 1)
    return x_px, y_px


def visualize(image, detection_result) -> np.ndarray:
    """Draws bounding boxes and keypoints on the input image and returns it.

    Args:
        image: The input RGB image.
        detection_result: The list of all "Detection" entities to be visualized.

    Returns:
        Image with bounding boxes.
    """
    annotated_image = image.copy()
    height, width, _ = image.shape

    for detection in detection_result.detections:
        # Draw bounding_box
        bbox = detection.bounding_box
        start_point = bbox.origin_x, bbox.origin_y
        end_point = bbox.origin_x + bbox.width, bbox.origin_y + bbox.height
        cv2.rectangle(annotated_image, start_point, end_point, TEXT_COLOR, 3)

        # Draw keypoints
        for keypoint in detection.keypoints:
            keypoint_px = _normalized_to_pixel_coordinates(keypoint.x, keypoint.y,
                                                           width, height)
            if keypoint_px is None:
                # The keypoint lies outside the image, so there is nothing to draw.
                continue
            color, thickness, radius = (0, 255, 0), 2, 2
            # cv2.circle takes (img, center, radius, color, thickness).
            cv2.circle(annotated_image, keypoint_px, radius, color, thickness)

        # Draw label and score
        category = detection.categories[0]
        category_name = category.category_name
        category_name = '' if category_name is None else category_name
        probability = round(category.score, 2)
        result_text = category_name + ' (' + str(probability) + ')'
        text_location = (MARGIN + bbox.origin_x,
                         MARGIN + ROW_SIZE + bbox.origin_y)
        cv2.putText(annotated_image, result_text, text_location, cv2.FONT_HERSHEY_PLAIN,
                    FONT_SIZE, TEXT_COLOR, FONT_THICKNESS)

    return annotated_image


# Create a FaceDetector object.
base_options = python.BaseOptions(model_asset_path='blaze_face_short_range.tflite')
# https://ai.google.dev/edge/mediapipe/solutions/vision/face_detector
options = vision.FaceDetectorOptions(base_options=base_options)
detector = vision.FaceDetector.create_from_options(options)

# Load the input image.
image = mp.Image.create_from_file('face.jpg')
# Alternative: load with OpenCV and convert BGR to RGB before wrapping it.
# cv_image = cv2.imread('face.jpg')
# image = mp.Image(image_format=mp.ImageFormat.SRGB,
#                  data=cv2.cvtColor(cv_image, cv2.COLOR_BGR2RGB))
# https://ai.google.dev/edge/api/mediapipe/python/mp/Image

# Detect faces in the input image.
detection_result = detector.detect(image)

# Process the detection result. In this case, visualize it.
image_copy = np.copy(image.numpy_view())
annotated_image = visualize(image_copy, detection_result)
# The annotated image is RGB, while cv2.imshow expects BGR.
bgr_annotated_image = cv2.cvtColor(annotated_image, cv2.COLOR_RGB2BGR)
cv2.imshow('sean', bgr_annotated_image)
cv2.waitKey(0)
cv2.destroyAllWindows()
```
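The normalized-to-pixel conversion done by `_normalized_to_pixel_coordinates` can be checked on its own, without a model or an image. The following is a minimal stand-alone sketch of the same rule; the name `to_pixel` and the 640x480 image size are only illustrative and do not come from the original listing. Values outside [0, 1] give `None`, and a value of exactly 1.0 is clamped to the last pixel index.

```python
import math
from typing import Optional, Tuple


def to_pixel(nx: float, ny: float, width: int, height: int) -> Optional[Tuple[int, int]]:
    """Map normalized (0..1) coordinates to integer pixel coordinates."""

    def in_unit_range(v: float) -> bool:
        # Accept values in [0, 1]; math.isclose also lets through a value
        # that is only a rounding error above 1.
        return (v > 0 or math.isclose(0, v)) and (v < 1 or math.isclose(1, v))

    if not (in_unit_range(nx) and in_unit_range(ny)):
        return None
    # floor() yields `width` when nx == 1.0, so clamp to the last valid index.
    return (min(math.floor(nx * width), width - 1),
            min(math.floor(ny * height), height - 1))


print(to_pixel(0.5, 0.5, 640, 480))  # (320, 240)
print(to_pixel(1.0, 1.0, 640, 480))  # (639, 479)
print(to_pixel(1.2, 0.5, 640, 480))  # None
```

The `None` case is why a caller should check the result before passing it to a drawing function such as `cv2.circle`.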

Model file: blaze_face_short_range.tflite (0.22 MB)
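If the model file is not already next to the script, it can be fetched once before the detector is created. This is a minimal sketch, not part of the original post: the helper name `ensure_model` is hypothetical, and the URL is an assumption based on the download link shown in Google's Face Detector guide, so check it against the guide before relying on it.

```python
from pathlib import Path
from urllib.request import urlretrieve

# Assumed download location (taken from the official guide; verify before use).
MODEL_URL = ("https://storage.googleapis.com/mediapipe-models/face_detector/"
             "blaze_face_short_range/float16/1/blaze_face_short_range.tflite")


def ensure_model(path: str = "blaze_face_short_range.tflite",
                 url: str = MODEL_URL) -> Path:
    """Return the model path, downloading the file only if it is missing."""
    model_path = Path(path)
    if not model_path.exists():
        # Network access happens only on the first run.
        urlretrieve(url, model_path)
    return model_path
```

Calling `ensure_model()` before `python.BaseOptions(...)` and passing `str(ensure_model())` as `model_asset_path` would keep the rest of the listing unchanged.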

Enter the source code and run it.

The eyes, nose, mouth, and ears are all detected accurately.
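To tell which dot is which, the keypoints can be labeled by position. The sketch below assumes the short-range BlazeFace model returns six keypoints per face in the order right eye, left eye, nose tip, mouth center, right ear tragion, left ear tragion, as MediaPipe's documentation describes; the helper `name_keypoints` and the label strings are hypothetical. `SimpleNamespace` objects stand in for MediaPipe keypoints, which expose normalized `x` and `y` attributes, so the example runs without the library.

```python
from types import SimpleNamespace
from typing import Dict, Iterable, Tuple

# Assumed keypoint order for the short-range face detection model.
KEYPOINT_NAMES = ("right_eye", "left_eye", "nose_tip", "mouth_center",
                  "right_ear_tragion", "left_ear_tragion")


def name_keypoints(keypoints: Iterable, image_width: int,
                   image_height: int) -> Dict[str, Tuple[int, int]]:
    """Pair each keypoint with a label and convert it to pixel coordinates."""
    named = {}
    for name, kp in zip(KEYPOINT_NAMES, keypoints):
        # Unlike the listing, out-of-range values are clamped to the image
        # border instead of being dropped.
        x = min(max(int(kp.x * image_width), 0), image_width - 1)
        y = min(max(int(kp.y * image_height), 0), image_height - 1)
        named[name] = (x, y)
    return named


# Stand-in keypoints; with MediaPipe this would be detection.keypoints.
fake_keypoints = [SimpleNamespace(x=0.25, y=0.5), SimpleNamespace(x=0.75, y=0.5)]
print(name_keypoints(fake_keypoints, 640, 480))
# {'right_eye': (160, 240), 'left_eye': (480, 240)}
```

In the post's script, `name_keypoints(detection.keypoints, width, height)` could be called inside the loop in `visualize` to draw a label next to each dot.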
